Starting from the historical roots of Cognitive Science, and considering its present status, I argue that it is not possible to find a single object or method that would unify the various perspectives into a single disciplinary perspective. Thus, I consider the plural expression “cognitive sciences” more appropriate than the singular one, unless a framework for understanding multidisciplinary collaboration is found. I then briefly describe a meta-theoretic system, suggesting how cooperation between cognitive disciplines may have true explanatory value. In this system, a single commonsense “fact” is described as a different “state” from the perspective of different disciplines (as a physical state, or a state of the body, of the brain, of consciousness, etc.). Such descriptions include new states resulting from changes of state (“events”), arranged along a time sequence (called a “flow”). A parallel representation of different flows, describing from various disciplinary standpoints the same events occurring in a certain time course (called a “flow-chain”), makes it possible to establish the nature of correspondences and links between events in the same or different flows. I argue that a multidisciplinary exchange is really needed for explanation when a cognitive phenomenon includes events that are correlated but cannot be causally linked inside a single flow, i.e. using a set of descriptions belonging to a single discipline.

1. Origins of “Cognitive Science”

The expression “Cognitive Science” was first used by Christopher Longuet-Higgins (1973), a scholar who moved from Chemistry and Theoretical Physics to Artificial Intelligence (AI). In 1967 he founded a Machine Intelligence and Perception Department in Edinburgh, where he personally pursued the study of artificial vision and assembled a group of psychologists, linguists, and neuroscientists to work on interdisciplinary projects. He considered AI a sort of “theoretical psychology” (Hunefeld & Brunetti, 2004) and in fact became professor of Experimental Psychology. Cognitive Science first appeared officially as a book title in Bobrow & Collins (1975). There it was defined as a new field that “includes elements of psychology, computer science, linguistics, philosophy, and education, but it is more than the intersection of these disciplines. Their integration has produced a new set of tools for dealing with a broad range of questions. In recent years, the interaction among the workers in these fields has led to exciting new developments in our understanding of intelligent systems and the development of a science of cognition.” (Bobrow & Collins, 1975, from the Preface).

A new Journal,

These first definitions seemed to point towards the idea of Cognitive Science (CS) as a single new science, rather than a field of cooperation between different disciplines on related topics. After all, the expression took the singular form and not the plural one (Cognitive Sciences). Bobrow and Collins assumed that common problems and tools could be identified that would ground such a new discipline.

2. Is there a unique object for Cognitive Science?

In the following paragraphs, we shall first examine whether founding the unity of CS on these assumptions was justified, and then we will try to propose an alternative approach.

Let’s start with the unity of object. According to Collins’ definition, what unifies CS is having a “common set of problems”. This, in epistemological terms, is not tantamount to dealing with a common “scientific object”. In fact, problems arise from common sense, and science shares them with common sense. They are questions asked by laypersons, sometimes answered or even solved using “nonscientific” methods (think, for example, of naïve physics, which gives some explanation of physical phenomena; the naïve explanation, however, may turn out to be wrong when the same “problem” is addressed from a scientific perspective). Dealing with some particular set of problems, then, is not sufficient to give rise to a single discipline. Science usually emerges with the aim of accounting for “some phenomena” better than common sense does, or of giving stronger reasons to agree on some explanations. But what makes such “phenomena” really shared as a common object? Various points of view, in fact, could carve out different scientific objects from everyday reality. Normally, a certain field of knowledge becomes an established scientific discipline when a community of researchers comes to share criteria about an acceptable vocabulary, statements, procedures, and protocols, to be applied to commonsense evidence. This would also be the case with CS if some unifying criteria could be identified.

A possible unifying factor could be that CS is the

Currently, such a definition is not so straightforward, because there is no uncontroversial characterization and endless debates still surround it (see for example “Topics in CS”, 2009). However, defining cognition did not appear to be a problem during the “good old-fashioned” days of cognitive science. This was because a standard interpretation of what is meant by “cognition” was available. As a matter of fact, most of the early cognitive scientists actually believed that

Cognitive science, then, at its inception was very close to the cognitivist perspective, which worked as a unifying umbrella for different disciplines. But now things have changed. If we turn to the present landscape, among the various attitudes towards cognition, we find three main approaches that still consider CS as a unique discipline on the basis of an alleged common definition of cognition: one that keeps itself faithful to the original computational-cognitivist approach, one that equates the concept of cognition with “mind”, and another one that equates it with “brain”.

The first perspective is based on the assumption that cognition is computation. But beyond the discussion about whether this view of cognition is explanatorily adequate (see this Journal, n. 12-4 issue, e.g. Chalmers, 2011; Towl, 2011), here we consider the position according to which the computational approach is the best

A second, popular unifying perspective is to consider cognition as

Unfortunately, however, mind is not a clearer concept than cognition itself. For example, some scholars associate the term “mind” with conscious experience, but psychoanalysts are not the only ones who believe that many interesting “mental” phenomena happen outside consciousness. On the other hand, there is no satisfactory theory about what conscious experience is. Also, and this is perhaps the most problematic aspect, the term “mind” can readily reveal an implicit dualism, as opposed to body and biological processes. Moreover, some argue that even “mindless” organisms may exhibit some properties that may be defined as “cognitive”, so the lower bound of cognition (“minimal cognition”) is uncertain (see e.g. Van Duijn et al., 2006).

A recent and increasingly popular trend is in some way the mirror image of the “mind” stream, and consists in considering cognition as the product of brain and neural activity. According to some folk perception, CS is even tantamount to cognitive

A different view, which could offer a solution to the problem of defining cognition while avoiding the shortcomings of the computational approach, is the dynamical systems standpoint (Schöner, 2007). Dynamical systems (including connectionist ones)1 are in fact gaining more and more credit. Proponents of this approach consider it more suitable for expressing the complexity of the phenomena that should be investigated by cognitive science, where brain and body are involved along with mind, natural and social environment, etc. Dynamical systems are flexible because they can take into account different properties of cognitive systems such as stability, instability, and non-linearity (when small changes can lead to large consequences). So this approach is particularly suitable for producing accurate accounts of sensorimotor aspects (e.g. motor control, object grasping, etc.), and in general of “embodied” processes that involve continuous, real-time control. The dynamical systems approach has been gradually extended to visual working memory and to some aspects of verbal learning, but unquestionably what is interesting when talking about cognition are high-level phenomena. Even if the dynamical systems approach promises to be usable for accounts of higher-level phenomena (Spencer, Perone, & Johnson, 2009), its definition of cognition still seems placed at a low level. The need to integrate high and low perspectives is recognized, but there is no clear and commonly accepted method to achieve this result. So dynamical systems do not qualify as a candidate approach for a unifying perspective in CS either.

An additional perspective that is becoming more and more popular is the one that considers cognition as situated-embodied action. One example is Glenberg (2010), who believes that different perspectives can be unified because they all take “body” into consideration. The strongest approach is the one called “enactive” or “autopoietic” (i.e. considering cognitive systems as autonomous and self-organizing). It is a further candidate as a unifying perspective because it strives to apply the same conceptual and methodological framework to a wide range of phenomena, going “from cell to society” (Froese & Di Paolo, 2011; Stewart et al., 2011), and thus has a multidisciplinary nature. In this perspective, cognition is not a representational process, but a process of “sense-making” during a dynamic interaction with the environment (de Bruin & Kästner, 2012). In the widest sense, cognition = life (Stewart, 1996; Thompson, 2004, 2007). But enactivists have to show how “an explanatory framework that accounts for basic biological processes can be systematically extended to incorporate the highest reaches of human cognition” (De Jaegher & Froese 2009, p. 439), i.e. to bridge the gap from life to mind, which De Jaegher and Froese called the “cognitive gap”. Up to now, there is no convincing proof that such an approach can explain

1 Connectionist systems, which are very popular, can be considered a particular case of dynamical systems (van Gelder, 1995), having some additional features that are not shared by all dynamical systems (a large number of homogeneous units, activated as a function of the weighted sum of the activations of other connected units).

3. Is there a unique method for Cognitive Science?

Let’s now briefly consider whether it is really appropriate to take supposed common methods as the unifying ground for CS. Bobrow & Collins (1975) and Collins (1977) introduced the use of a common set of tools (or methods, in epistemological terms) as a unifying factor for CS. Among these tools, Collins (1977) mentioned analysis techniques and theoretical formalisms. As examples of analysis techniques he mentioned protocol analysis, discourse analysis, and experimental techniques coming from cognitive psychology; as examples of theoretical formalisms he mentioned means-end analysis, discrimination nets, semantic nets, production systems, ATN grammars, frames, etc.

It may be easily observed that some of the analysis techniques and theoretical formalisms mentioned by Collins are now outdated and, in any case, it would be hard to say that they have become used as a common set of tools by

Rather, the methods actually used by cognitive scientists are those that they normally use inside their background disciplines. It would be difficult to ask a neuroscientist to be familiar with discourse analysis or a psycholinguist to be able to properly read a brain scan. Thus, it seems fair to say that so far no universally accepted set of methods has emerged that characterizes CS as a single discipline.

4. Cognitive Science as a multidisciplinary endeavor

Bringing the whole of cognitive science under a single disciplinary perspective or a single unifying principle therefore seems impossible in the present state of things. As I have previously pointed out, science tries to account for commonsense problems by transforming them into scientific “objects” when an agreement is established in a community of scientists about a set of acceptable protocols, statements, procedures, etc. This does not appear to be the case with CS, since, as we have seen, there is no accepted notion of what cognitive processes are and, also, specific methodologies for this discipline have never been established. Cognitive scientists, in fact, tend to take as objects of study of CS what they normally investigate in their own background disciplines: subjective experiences if they are philosophers, brain activations if they are neuroscientists, information processing if they are cognitive psychologists, and so on. And they use their own methods. There is no true fusion of different disciplines into a genuinely new one. For this reason, we claim that CS is best considered as a

We must admit that the different disciplines involved in CS actually seem to talk about different things (representations, computations, qualia, concepts, brain activations, connection weights, etc.) and do so using different languages. Should we then give up on defining the field of cognitive science, and should we say that CS is a nonexistent science? This would be a rather extreme conclusion. We can pragmatically recognize that there must be a reason why scientists belonging to different research areas are induced to join under this label, to publish journals, and to meet at conferences. If the unifying factor cannot be found in a truly shared scientific object or method, there can nonetheless be a strong pragmatic factor.

Cognition and mind are abstract terms that seem to refer to “entities”, but we do not have such things in the way we have a dog or a car (Trigg & Kalish, 2011). Rather, such terms refer to regularities, “laws” that explain abilities, performances, behaviour. As a matter of fact, all cognitive scientists share the quest for mechanisms that explain intelligent behaviour in all its forms, i.e. in humans, animals, and machines. “Cognitive systems” and “intelligent systems” are actually used as synonymous terms. Of course, there is the risk of shifting the discussion to another poorly defined term, “intelligence”. As is well-known, in AI is intelligent what for

The true challenge faced by the cognitive sciences concerns how to cooperate and how to sort out different perspectives, methods, and languages. A pragmatic way to do this is to consider first of all a certain issue that emerges from common sense and that needs explanation. The most typical issues concern particular cognitive tasks. If a community of scholars is able to reach an agreement in considering this issue as an acceptable problem for cognitive science, then the way is open for collaboration. But the problem remains of “putting together”, comparing, and integrating different views.

5. From a cognitive system to a meta-theoretic system: a proposal

In pursuing a unified perspective, Greco (2006) proposed that a possible solution would be to consider different accounts as talking about something more general than special aspects of cognition, namely about the unique “fact” present in the commonsense perspective, which needs to be explained (e.g., a task). The operation of a cognitive system (i.e. a system that uses or manages knowledge) during a task may then be described differently according to different standpoints. Some instances are:

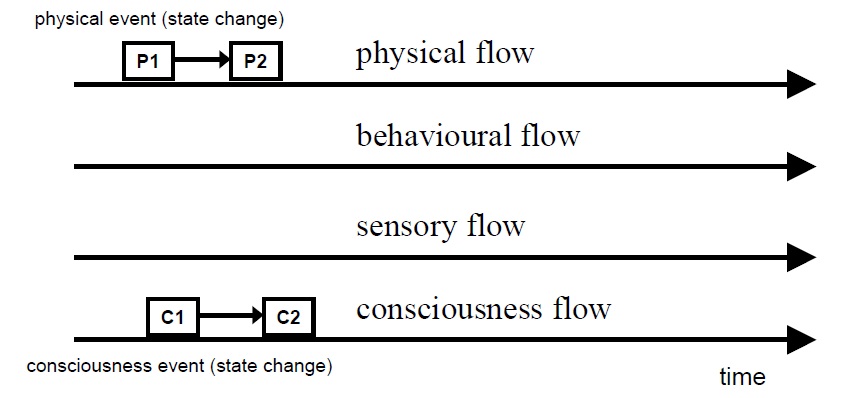

The commonsense idea that a “fact” does not happen at a single moment is captured by the notion of “process”, so widespread in cognitive science. A process encompasses the different states that a system may assume over time, and the transitions between them. A new state, as a snapshot, can be identified only when the previous state changes: we call this change of state an “event”, and a sequence of events in time a “flow”. A cognitive flow is a sequence of cognitive events, i.e. of changes of state, in a cognitive system.
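As a purely illustrative sketch, and not part of the system as originally proposed, the relation between states, events, and a flow can be written down in a few lines of Python. The names `State`, `Event`, and `flow_from_states`, the integer time stamps, and the behavioral snapshots are all assumptions introduced only for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class State:
    """A snapshot of the system at a given (hypothetical) time step."""
    time: int
    description: str


@dataclass(frozen=True)
class Event:
    """A change of state: the description at `time` differs from the previous one."""
    time: int
    before: str
    after: str


def flow_from_states(states: List[State]) -> List[Event]:
    """Return the flow, i.e. the time-ordered sequence of events.

    An event is recorded only when a snapshot differs from the one before it;
    unchanged snapshots produce no event.
    """
    ordered = sorted(states, key=lambda s: s.time)
    flow = []
    for prev, curr in zip(ordered, ordered[1:]):
        if curr.description != prev.description:
            flow.append(Event(curr.time, prev.description, curr.description))
    return flow


# Hypothetical snapshots taken from a single (behavioral) standpoint.
snapshots = [
    State(0, "resting"),
    State(1, "resting"),            # no change, hence no event
    State(2, "approaching food"),
    State(3, "eating"),
]

flow = flow_from_states(snapshots)
for event in flow:
    print(event.time, event.before, "->", event.after)
```

In this reading, four snapshots yield a flow of only two events, because a state that merely persists does not count as something that “happens”.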

Taking

In our system, there are only descriptions so far. But the purpose of scientific enterprise is to attain explanations. Explanations take place by considering the

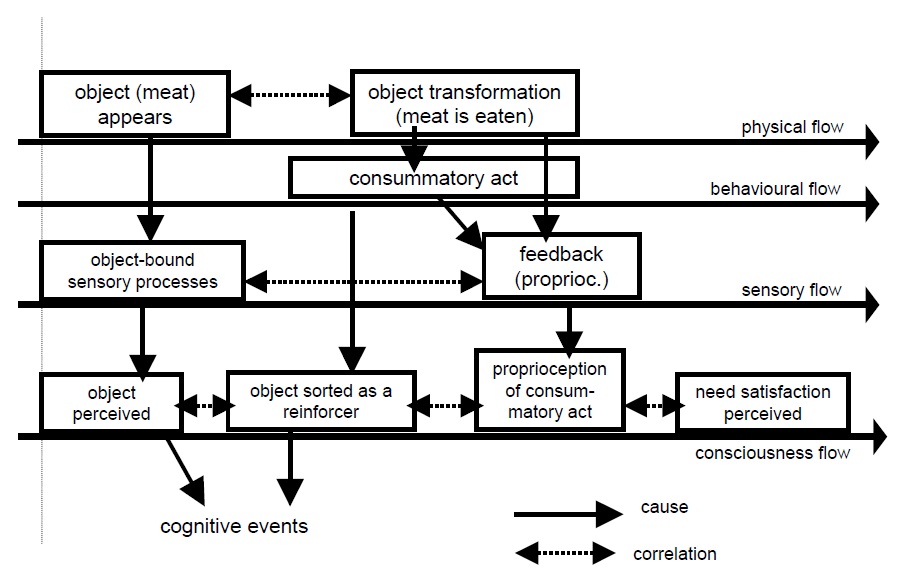

A simple example can explain this point. Let’s take, for the sake of argument, reinforcement in classical conditioning (Figure 2), even if – given its almost automatic nature – many would not consider it as a true cognitive phenomenon. Different disciplinary

The point here is that disciplinary descriptions (horizontal readings of the flow-chain) may not be sufficient for explanation, but added value may come from trans-disciplinary descriptions (vertical readings). In our simple example, if one stays on the physical flow only, and thus takes into account only descriptions like “the meat was available” and “the organism ate the meat”, these two statements would appear just correlated, but not causally linked into a coherent explanation. A sequence of event descriptions that are available in other flows (and thus made in other disciplinary languages) may help us better understand what happens. The appearance of the meat and its being eaten cannot be explained in physical terms alone, but explanation may be easier if the event description is enriched by introducing expressions like “the meat was perceived”, “it was considered as a reinforcer”, or even by introducing mediating cognitive events, and so on.

Some vertical links may be treated as correlations and not as causations: for instance, eating in the behavioral flow is likely accompanied by food processing in the physiological flow. Sometimes events “happen” together by chance; at other times there is a common factor that explains the correlation. This can be made clear in our system, and the concepts of different disciplines are used only when they contribute to an overall explanation. For example, the physiological events of digestion need to be mentioned only if they have some role in the phenomenon that is being explained (food absorption may be considered relevant in order to make “eating” work as a reward).
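The distinction between horizontal and vertical readings, and between causal and merely correlational links, can be made concrete with a minimal sketch. It is only one possible encoding, under stated assumptions: the class names `ChainEvent` and `FlowChain`, the flow labels, the time steps, and the specific links are hypothetical choices made for illustration, loosely following the meat example above.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ChainEvent:
    flow: str          # disciplinary flow the description belongs to
    time: int          # hypothetical time step
    description: str


@dataclass
class FlowChain:
    flows: Dict[str, List[ChainEvent]] = field(default_factory=dict)
    links: List[Tuple[ChainEvent, ChainEvent, str]] = field(default_factory=list)

    def add_event(self, flow: str, time: int, description: str) -> ChainEvent:
        event = ChainEvent(flow, time, description)
        self.flows.setdefault(flow, []).append(event)
        self.flows[flow].sort(key=lambda e: e.time)
        return event

    def link(self, source: ChainEvent, target: ChainEvent, kind: str) -> None:
        if kind not in ("causal", "correlation"):
            raise ValueError("kind must be 'causal' or 'correlation'")
        self.links.append((source, target, kind))

    def horizontal(self, flow: str) -> List[ChainEvent]:
        """Disciplinary reading: the events of one flow, in time order."""
        return list(self.flows.get(flow, []))

    def vertical(self, time: int) -> List[ChainEvent]:
        """Trans-disciplinary reading: events of all flows at one time step."""
        return [e for events in self.flows.values() for e in events if e.time == time]

    def causally_linked(self, start: ChainEvent, goal: ChainEvent,
                        only_flow: Optional[str] = None) -> bool:
        """Search for a chain of causal links from start to goal.

        If only_flow is given, intermediate events must stay inside that flow.
        """
        stack, seen = [start], {start}
        while stack:
            node = stack.pop()
            if node == goal:
                return True
            for source, target, kind in self.links:
                if source != node or kind != "causal" or target in seen:
                    continue
                if only_flow is not None and target.flow != only_flow:
                    continue
                seen.add(target)
                stack.append(target)
        return False


chain = FlowChain()
available = chain.add_event("physical", 1, "the meat was available")
eaten = chain.add_event("physical", 4, "the organism ate the meat")
perceived = chain.add_event("cognitive", 2, "the meat was perceived")
valued = chain.add_event("cognitive", 3, "it was considered as a reinforcer")
processed = chain.add_event("physiological", 4, "food was processed")

chain.link(available, perceived, "causal")
chain.link(perceived, valued, "causal")
chain.link(valued, eaten, "causal")
chain.link(eaten, processed, "correlation")   # accompanies eating, explains nothing here

print(chain.causally_linked(available, eaten, only_flow="physical"))  # False
print(chain.causally_linked(available, eaten))                        # True
```

Restricted to the physical flow, the two events remain unconnected; once the cognitive flow is admitted, a causal path appears. The correlational link to the physiological flow is recorded but plays no part in the path, which mirrors the idea that such events need to be mentioned only when they contribute to the explanation.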

Please note that different flows are not different “levels”. In my view, the “level” metaphor is not a good ground for distinguishing between different cognitive disciplines. At first sight, some disciplines seem to deal with low-level processes (like sensory, brain, hardware, etc.) and others with high-level ones (like concepts, expectations, motivations, qualia…). However, it is not obvious that even disciplines that are at the same level make compatible descriptions, because disciplinary languages are different anyway and often there is no clear translation. Philosophers may speak of “propositional attitudes” where psychologists speak of “mental models”, neuroscientists may speak of “striatal dopamine level” and connectionists of “vectors in activation space”. Hence the distinction between levels seems to be of little use from the point of view of multidisciplinary cooperation. In the system here proposed, there is no need to translate one language into another, but only a

The further question arises of which of the possible alternative descriptions is the “good” one, viz. the one that gives the best explanation. One of the most frequent cases is when the alternatives concern subjective reports and neural descriptions. My answer is that we almost never face a single explanation; the most suitable choice is the one that best fits some particular purpose. Often such descriptions concern events that cannot be eliminated without losing explanatory power. By contrast, mere enabling conditions can usually be eliminated unless they are “critical” for some phenomenon to happen. As an example: the presence of neural processes in some part of the brain is an enabling condition for virtually every cognitive process, so mentioning it does not add any value to our explanation; but showing what the particular brain location is, or a particular fault in the neural process, can be critical in understanding something that cannot be explained in the behavioral or consciousness flows alone.

The meta-theoretic system that I have presented is an attempt to show how cooperation between disciplines can be considered not only possible but also necessary. In my view, true interdisciplinary cooperation (which is the essential core of cognitive science) is not a simple recognition of what others are doing, or simply being “kept informed” about questions that could be relevant to cognition, as often seems to happen. I think that the fundamental point is the need to specify