Information retrieval (IR) has emerged as a central technology in modern society by enabling individuals to extend their ability to discover and obtain knowledge. The quality of queries has a profound impact on retrieval performance because users must formulate their information needs into queries. Queries that produce low retrieval performance on most IR systems are called

With the ongoing debate over the benefit of query disambiguation on IR, this study revisits the issue with a new approach that will segment a keyword query into topics and resolve ambiguity only at topic level. The motivation of the study is to examine whether query disambiguation is helpful to IR with the latest developments in machine learning and new knowledge resources such as Wikipedia. In particular, this study focuses on the following research questions,

Does query disambiguation improve retrieval?

Do the structural features of a Wikipedia entry (e.g., title, hyperlinks) offer an effective means of establishing context for topic detection and query disambiguation?

with two corresponding hypotheses:

H1. There is no difference in performance between retrieval runs with query disambiguation and baseline retrieval runs without query disambiguation.

H2. There is no difference in performance between retrieval runs with query disambiguation based on Wikipedia knowledge and retrieval runs with query disambiguation based on free text.

The rest of the paper is organized to provide details on the investigation of both questions starting with a brief review of the existing approaches

1 The average length of a web query is 2.4 terms and important terms that are descriptive of the information needed are often missing from these short queries (Spink, Wolfram, Jansen, & Saracevic, 2001)

Methods for WSD are characterized based on the approach they adopt to acquire word meanings. Approaches that adopt predefined word senses from existing knowledge structures such as dictionaries, thesauri, and Wikipedia are considered to be knowledge-based, while approaches that extract word sense information from the underlying collections are considered to be corpus-based.

Corpus-based WSD approaches derive knowledge from corpora using machine learning algorithms. Researchers who rely on this approach consider word sense disambiguation a straightforward classification problem that attempts to determine the category of a context based on models learned from examples. A Bayesian algorithm has been widely adopted for corpus- based WSD. Gale, Church, & Yarowsky (1992) applied the Naive Bayesian approach for WSD using a bilingual corpus for training. They selected as the context the 50 words surrounding an ambiguous word. The correct sense was then determined by selecting the sense with the highest probability based on the context. The authors found six words in the corpus that had only two senses (e.g.,

There is a growing trend to use Wikipedia2 as the sense resource for disambiguation because it provides not only a huge lexicon (i.e., the current English version contains about four million articles) but also extensive descriptions of each word sense. Mihalcea and Csomai (2007) disambiguated terms that appeared in text by mapping them to appropriate Wikipedia articles. The whole process involved two steps: term extraction and word sense disambiguation. In the first step, terms were ranked by their likelihood of becoming hyperlinks in Wikipedia, and only those terms with likelihoods that exceeded a predefined threshold were chosen for disambiguation. For word sense disambiguation, the authors derived word senses from hyperlinks found within Wikipedia articles in order to create training data for supervised disambiguation. For every hyperlink in a Wikipedia article, its author must select the correct destination (i.e., another Wikipedia article) that represents the sense of the anchor text. For example, the term

Medelyan, Witten, & Milne (2008) developed another disambiguation approach using Wikipedia as a knowledge resource. They used Mihalcea and Csomai’s (2007) strategy of collecting word senses from links in Wikipedia articles. To disambiguate a polyseme in the text, the surrounding terms that were unambiguous Wikipedia anchors (i.e., links to only one Wikipedia article) were then chosen as contexts. The disambiguation process was carried out by calculating semantic similarity to the contexts and conditional probability for the polyseme. Semantic similarity was calculated as the average of each candidate article that represented a sense of the polyseme to all context articles. Conditional probability was computed from counts of Wikipedia links; for example, because the word

The latest development in query disambiguation research emphasizes the integration of natural language technology and knowledge bases. Selvaretnam and Belkhatir (2012) proposed a query expansion framework considering sense disambiguation as an essential step. In particular, the authors suggested that syntactic parsing such as part of speech recognition should be applied at first followed by semantic sense disambiguation. The decision of whether a sense is appropriate will depend on the relatedness of that sense to its occurring context measured in an external knowledge base such as WordNet. Similar to this study, Klyuev & Haralambous (2011) investigated query disambiguation in the context of improving query expansion, with their finding indicating that combining multiple knowledge bases such as WordNet and Wikipedia could bring better retrieval results. The authors also suggested that selecting the Wikipedia articles that are closely related to the query is vital to the success of query expansion, which affirms the importance of query disambiguation if one query term has multiple matched Wikipedia articles.

Lack of context deepens the ambiguity problem for weak queries. Most current disambiguation approaches demand 20 to 50 context words to produce a relatively reliable prediction. However, weak queries are unable to provide that much context because they usually contain only two or three words. A common solution is to enrich a short query with query expansion techniques such as pseudo relevance feedback and web expansion. The challenge when using these strategies is how to overcome the impact of “noisy” words due to inaccurate query expansion. Both query expansion and query disambiguation can benefit from operating at the level of the topic instead of the word. Query expansion at the topic level will reduce error due to query drift and lead to higher retrieval performance (Bendersky, Croft, & Smith, 2009). One major problem for query disambiguation is how to find the appropriate context for a polyseme. Using query topic detection, it is likely that a polyseme and its most revealing context will be grouped in the same segment based on co-occurrence patterns, and this will help a software agent to improve its disambiguation accuracy (Navigli, 2009). Recognizing boundaries and the correct meanings of query topics is an important step towards understanding a user’s search intent and improving the retrieval accuracy of weak queries. The unsupervised approach is efficient, but it has a high error rate. One solution that could lower the error rate would be to use external knowledge for guidance. The research reported here explored this direction by developing an approach to unsupervised query segmentation that utilized the knowledge in Wikipedia to achieve better performance on boundary recognition.

2 http://www.wikipedia.org/

3.1. Topic Detection with Language Model

The first challenge for topic-based query disambiguation is to develop an approach to recognize topics, such as phrases and named entities, from user queries. Given the success of the statistical language model (LM) with tasks involving natural language, including IR (C. X. Zhai, 2008), this study developed an LM-based approach as a solution for the challenge.

Each keyword query will be split into complementary pairs of n-grams, or “chunks,” as input for the topic detection process. For instance, the query

The topic detection algorithm first expands the query with textual contents from the Web to address the problem of lack of context, which is common in user queries. In particular, a Google search can be performed using the original query,3 and the textual content extracted from the first 20 results of the search can be used to provide context for the query. With this enriched context

Because

where

where

A serious problem imposed on LM-based approaches is data scarcity, which is worsened by the textual content because a few words occur frequently while many words appear rarely or are entirely absent in the document collection. Jelinek-Mercer smoothing, which has been proven to be effective for IR tasks (C. Zhai & Lafferty, 2004), is chosen as the method to estimate a “discount” probability to words that are not present. With Jeliner-Mercer smoothing,

where

and the background model

In summary, the generative probability of a query topic

and the candidate (i.e., query chunk) with the highest probability will be chosen as the query topic.

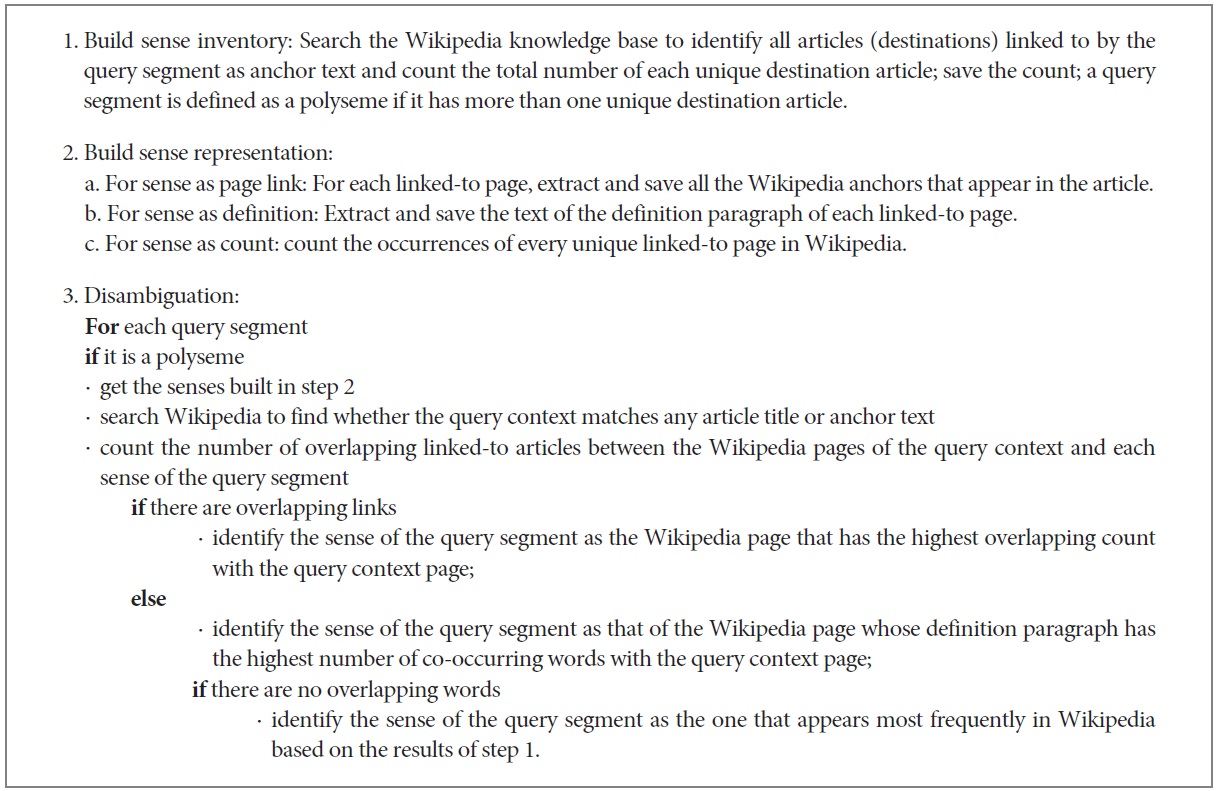

The first task of word sense disambiguation is to build what is known as a “sense inventory” containing all the possible meanings for each polyseme. Following the strategy of Mihalcea and Csomai (2007), the approach adopted here collects word senses as hyperlinks (i.e., anchors) in Wikipedia articles. For many hyperlinks in Wikipedia the author manually annotates the intended meaning of an anchor by linking it to a relevant Wikipedia article. Therefore, the sense inventory for any polyseme can be derived by extracting link destinations from all hyperlinks associated with the polyseme. Using this approach, five senses were identified for the polyseme

The disambiguation approach used in this research is known as a

3.3. Topic-based Query Expansion

One major problem related to weak queries is the failure to respond to required aspects of a user’s information need (Buckley & Harman, 2004); but adding inappropriate expansion terms to a weak query can lead to the problem of query drift and may reduce the performance of query expansion (Ruthven & Lalmas, 2003). To address problems of query drift, this research introduces a query expansion method that would provide for robust retrieval by exploiting the query topics detected in the disambiguation process.

The query expansion method used here relied on terms identified both in Wikipedia and on the Web in order to harvest evidence from two different types of content. Wikipedia is considered a structured resource

because the full text of each article was excluded from the process of query expansion: Given that expansion terms were extracted only from the title of a Wikipedia article and its definition paragraph (i.e., the first paragraph of a Wikipedia article) based on the frequency, they are not only concise and accurate but also provide a relatively small vocabulary. In contrast, even though expansion terms extracted from the Web would include terms that were potentially irrelevant, the fact that they are harvested from a large vocabulary and can therefore address aspects of the topic missing in Wikipedia articles was considered an advantage. Thus, this approach is able to exploit the advantages of both sources to offset the weakness of each. The technique chosen for Web-based query expansion is local context analysis (LCA), which selects concepts based on their co-occurrence with query terms and their frequency in the whole collection.

3 https://www.google.com/

4 The first paragraph of a Wikipedia article that is mandatory in order to provide a brief summary of the subject.

The test collections used in the experiment are AQUAINT and Blog06. The AQUAINT corpus is distributed by the Linguistic Data Consortium (LDC) and has been used in TREC competitions as the test collection for both the HARD Track and the Robust Track in 2005.5 The AQUAINT corpus consists of 1,033,461 news stories taken from the

This research used

4.2. Retrieval with Query Expansion

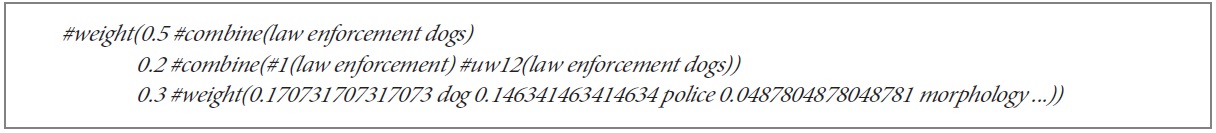

The process of query expansion used the detected query topics as input and produced as output a list of

weighted expansion terms for each query. The expansion terms were based on the detected query topics and were selected from both the Web and Wikipedia. The final version of the transformed query was formulated according to the Indri format and included three components: the original query, the detected query topics, and the top 20 expansion terms as determined by the weighting. The query topic component was constructed according to the following rules: If topics were detected in the query, then each topic would be formulated as an exact match, and all words in the original query would be included in an unordered match within a window of 12 words (i.e.,

4.3. Query Disambiguation Experiment

The first of these experiments (referred to hereafter as experiment #1) investigated the effect of query disambiguation on IR by comparing the retrieval performance of ambiguous queries with and without resolving topic ambiguity (i.e., H1). Four ambiguous queries from the Blog collection and ten ambiguous queries from the HARD collection were selected based on the criterion that either the query or, at minimum, one topic in the query was a Wikipedia hyperlink referring to one or more Wikipedia articles. For example, the string “black bear” could refer to a minor league baseball team or to a North American animal species. Query disambiguation effects on IR for ambiguous queries from the Blog and HARD collections were analyzed using four different query treatments:

4.4. Wikipedia for Query Disambiguation Experiment

The other experiment (referred to hereafter as experiment #2) investigated the hypothesis that using Wikipedia structures for query disambiguation would lead to better IR performance (i.e., H2). IR performance was measured by comparing the retrieval performance produced with two disambiguation approaches: results obtained by using Wikipedia knowledge and results obtained by using only textual context. Experiment #3 used only ambiguous query topics that had more than one sense representation derived from Wikipedia. For each ambiguous topic, this experiment attempted to identify the correct sense using two types of contexts: Wikipedia hyperlinks and free text (i.e., terms appearing in the first paragraph of the associated Wikipedia article). The assumption was that the two types of contexts would lead to significantly different disambiguation results (i.e., would not resolve to the same Wikipedia article). If a Wikipedia article was found, the polyseme under consideration would be considered a valid topic and the Wikipedia article would become the source of query expansion terms; if a Wikipedia article was not found, the polyseme under consideration would be treated as text without query expansion.

Queries from the Blog and HARD collections were disambiguated using knowledge at two different semantic levels: Wikipedia and free text. These experiments used four different query treatments:

wsd_qe_wiki10 represents query disambiguation (wsd) with Wikipedia knowledge (wiki) such as hyperlinks in the articles using the Wikipedia article that expresses the correct sense as the source for query expansion (qe) terms; if no associated Wikipedia article was found, the original query was used for retrieval without expansion;

wsd_qe_nowiki represents query disambiguation with text context (i.e., expansion terms acquired by Local Context Analysis from Google search results, or nowiki) to identify word sense using the Wikipedia article that expresses the correct sense as the source for query expansion; if no associated Wikipedia article was found, the original query was used for retrieval without expansion;

wsd_ir_wiki represents query disambiguation using Wikipedia knowledge such as hyperlinks in the articles to formulate the disambiguated query segment as a topic for information retrieval (ir); if no associated Wikipedia article was found, the query segment in consideration was treated as text;

wsd_ir_nowiki represents query disambiguation using text context (i.e., the rest of the query) to identify word sense and formulate the disambiguated query segment as a topic for information retrieval; if no associated Wikipedia article was found, the query segment was treated as text.

Retrieval performance was measured by MAP in both experiments.

5 The goal of the High Accuracy Retrieval from Document (HARD) Track is to achieve high accuracy retrieval from documents by leveraging additional information about the searcher and/or the search context captured using much targeted interaction with the searcher. The goal of the Robust track is to improve the consistency of retrieval technology by focusing on poorly performing topics.

6 A permalink in the blogosphere is a blog post that has a unique URL, which enables visitors to find it even if the post has been moved.

7 http://ir.dcs.gla.ac.uk/wiki/TREC-BLOG#head-c84eaf4868470d38ed1815b77e8fba909de21f54

8 The KL-divergence language model was chosen as the retrieval model for Indri.

9 For instance, the disambiguated segment Whole Foods in the query Whole Foods wind energy will be formulated as a topic in Indri query #combine(# 1(Whole Foods) #1(wind energy)).

10 The experiment wsd_qe_wiki follows the same procedure as outlined in wsd_qe in section 4.3; the additional suffix of _wiki is used to highlight the source of knowledge, which is the focus of the experiment. The same naming patterns also apply to wsd_ir_wiki.

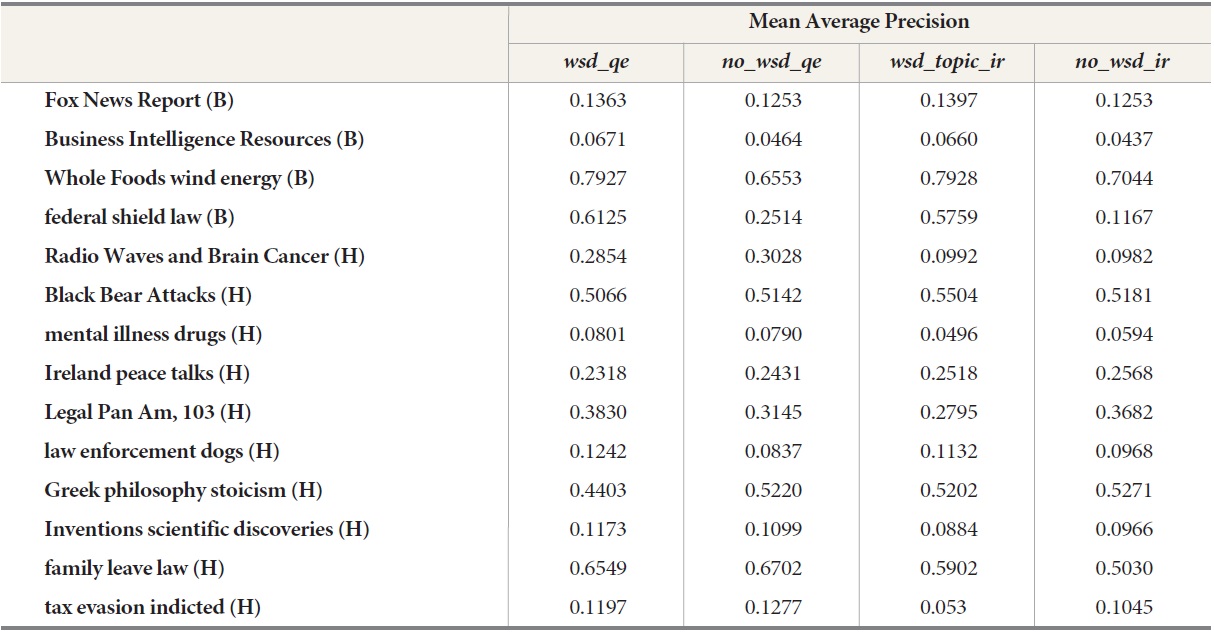

5.1. Query Disambiguation to IR Improvement

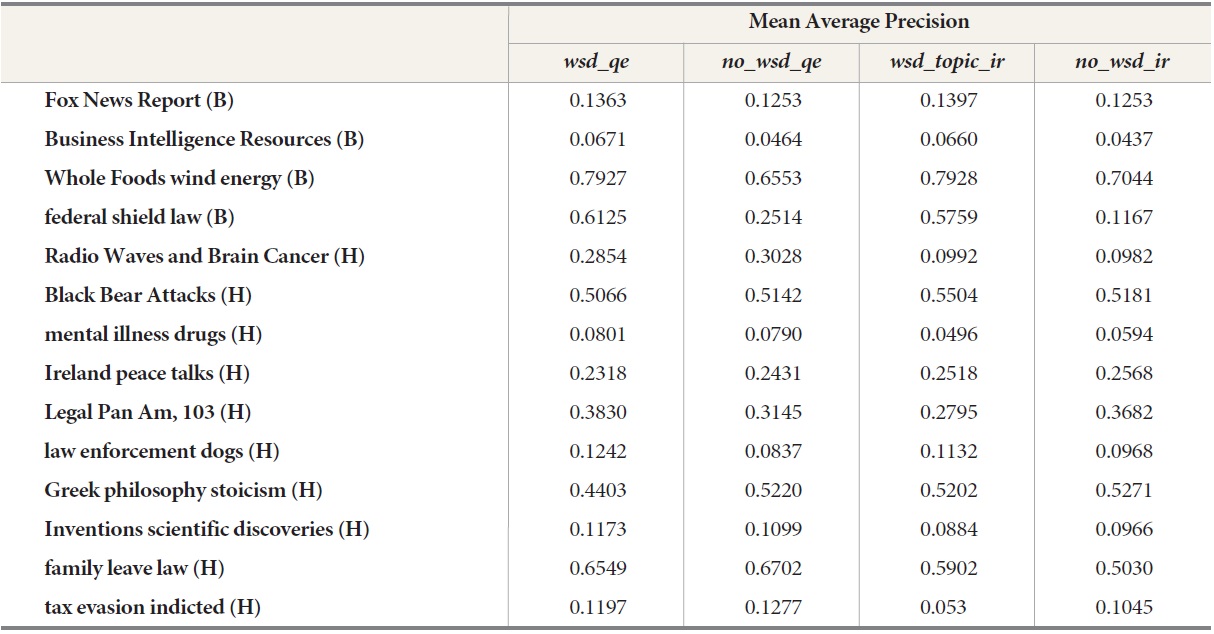

A summary of the results for retrieval with and without query disambiguation are provided in Table 1. The Wilcoxon signed ranks test performed on the results did not reject the null hypothesis (H1) that query disambiguation has no significant effect on retrieval performance for either the Blog queries or the HARD queries. For ambiguous queries from the Blog collection, Wilcoxon tests for two-tailed significance conducted between

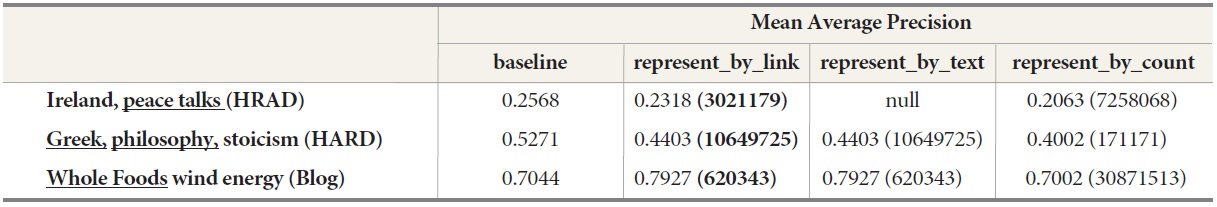

Query disambiguation effects on IR for ambiguous queries from Blog (denoted B) and HARD (denoted H) collections

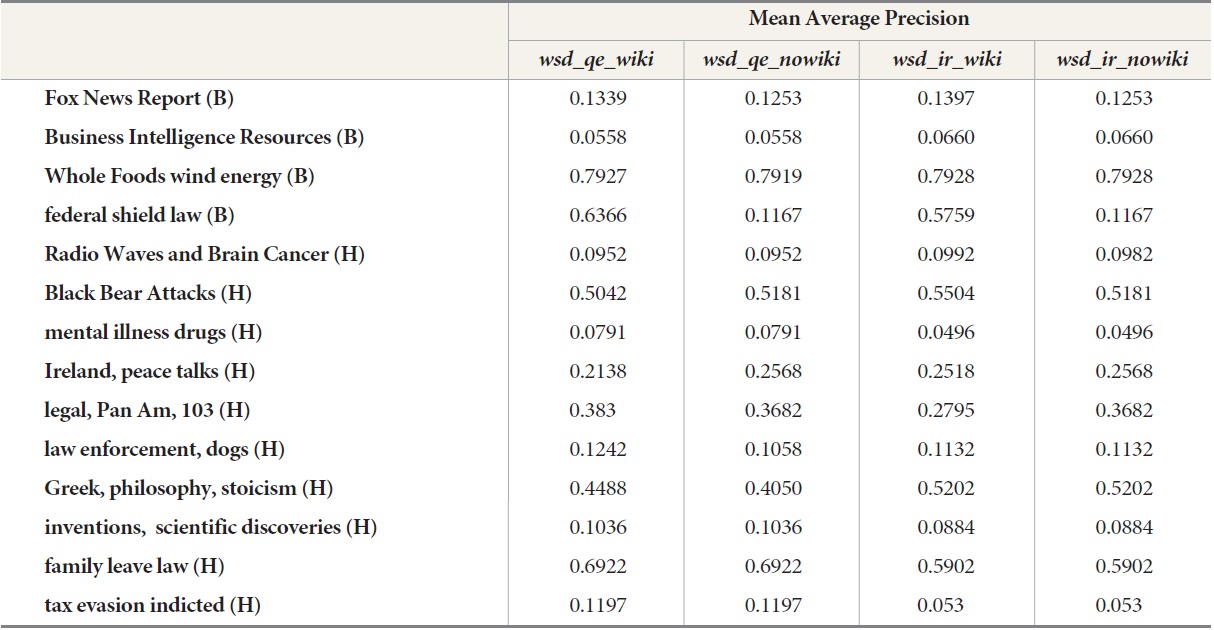

5.2. Wikipedia Effect on Query Disambiguation

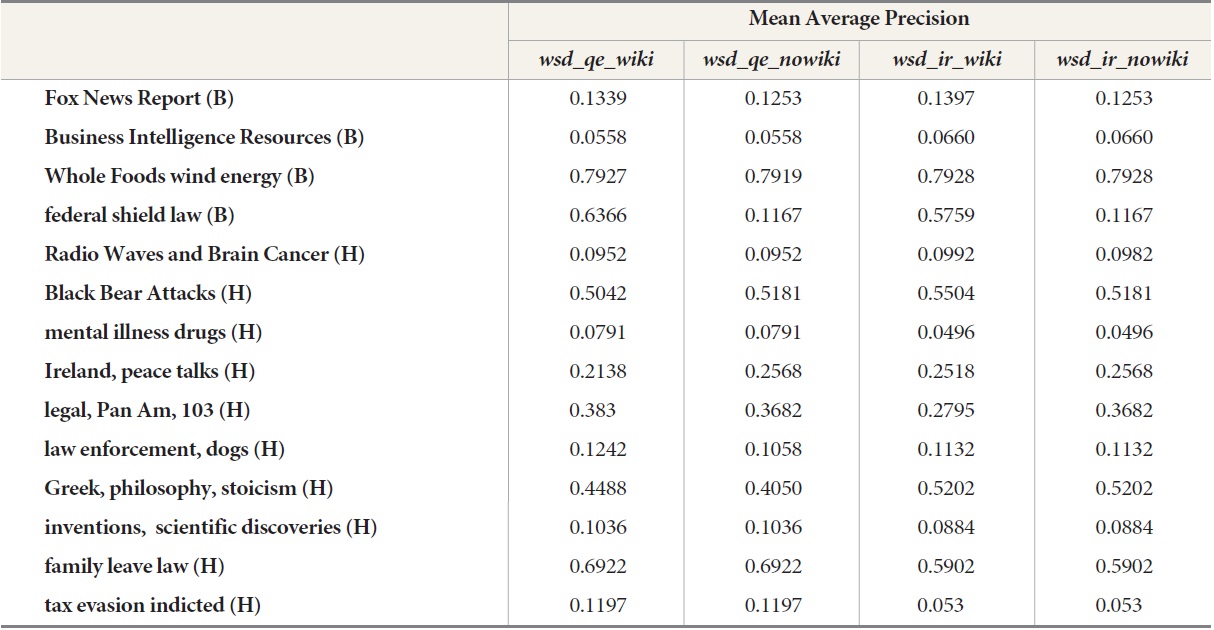

Retrieval results for queries from the Blog and HARD collections are listed in Table 2 when disambiguation is carried out using Wikipedia knowledge or free text. For both the Blog and the HARD queries, Wilcoxon’s signed ranks test did not reject the null hypothesis (H2) that using Wikipedia knowledge had little effect on query disambiguation. Comparison of the

Experimental results indicate that both of the null hypotheses regarding query disambiguation should be retained: Query disambiguation has little impact on IR; and the use of knowledge in Wikipedia documents

Effects of using Wikipedia knowledge for disambiguation of queries from Blog (denoted B) and HARD (denoted H) collections

does not produce significant improvement in disambiguation accuracy. Furthermore, this result is found for queries from both the Blog and the HARD collections.

One of the major motivations for this research is to re-examine the ongoing argument regarding the usefulness of query disambiguation in IR in light of relatively new knowledge resources such as Wikipedia. As has been pointed out in the IR literature (Harman, 1992; Salton & Buckley, 1990), disambiguation accuracy and quality of the sense inventory have been thought to make major contributions to disambiguation effects on IR. Given the experimental results indicating that the null hypotheses regarding query disambiguation should be retained for both query collections, it is helpful to examine the influence of each of these two factors in the context of the current findings.

6.1. Correlation between Disambiguation Accuracy and IR Performance

Query disambiguation accuracy has been claimed to be the most important factor affecting the effectiveness of disambiguation in IR (Buckley, Salton, Allan, & Singhal, 1995; Salton & Buckley, 1990). Based on the commonly held assumption that low accuracy in the resolution of polysemes will hurt retrieval performance, an experiment was designed to examine whether there was a correlation between disambiguation accuracy and retrieval performance: For an ambiguous query term, each of its word senses (i.e., a corresponding Wikipedia article) was represented by three features: anchor links that appeared in a Wikipedia article; the text in the first paragraph of an article; and the count of each sense appearing in Wikipedia. The hypothesis was that there would be discrepancies in disambiguation accuracy across the different sense representations, and the goal was to test whether retrieval performance would be significantly affected by ineffective query disambiguation.

Only one query from the Blog collection (i.e.,

Retrieval performance of disambiguation accuracy measured as MAP compared to baseline. Wikipedia page ids are indicated in parentheses and correct Wikipedia page ids are presented in bold

an impact on retrieval, as shown in Table 3.

To examine the impact of query disambiguation accuracy on retrieval performance, three statistical significance tests (i.e., t-Test with paired samples) were carried out on the results listed in Table 3:11 baseline vs. query expansion based on the correctly disambiguated Wikipedia page, which is in bold; baseline vs. query expansion based on the incorrectly disambiguated Wikipedia page; and query expansion based on the correctly disambiguated Wikipedia page vs. query expansion based on the incorrectly disambiguated Wikipedia page. The resulting

6.2. Wikipedia Effect for Query Disambiguation

Any attempt at resolving natural language ambiguities will depend on the quality and scale of the sense inventory, the repository of terms, and the common meanings for each term. WordNet is an example of a sense inventory and has been used extensively for word sense disambiguation (Jing & Croft, 1994; Qiu & Frei, 1993). However, according to recent studies (Rada Mihalcea, 2003; Prakash, Jurafsky, & Ng, 2007), WordNet has certain major drawbacks that make it unsuitable for query disambiguation in IR:

Sense granularity is too fine: For purposes of logic reasoning, definitions in WordNet include very fine distinctions between word senses. For instance, the verb eat has the two senses take in solid food and eat a meal. While this fine sense granularity is obviously helpful in areas such as artificial intelligence, it is not necessary in IR.

Semantic connections are too complete: The number of relationships defined in WordNet produces a huge number of possible semantic connections between two words. Such a large number of semantic connections will overload the IR system, requiring longer processing time without increasing relevance.

The scale of WordNet is too limited: The latest version of WordNet (i.e., WordNet 3.0) contains a total of 155,287 words and 206,941 word-sense pairs.12 Such a limited scope is not adequate for modern IR applications.

Given the weaknesses of WordNet, researchers have been looking for other knowledge resources that could be used for query disambiguation. Because of its large scale and the richness of its content, Wikipedia is a primary candidate and was selected for this research motivated by the hypothesis that the coverage and currency of Wikipedia articles would improve query disambiguation accuracy. However, as indicated by the results presented in Table 2, it is evident that using the structural knowledge in Wikipedia (i.e., the anchor links) did not lead to significant improvement in disambiguation accuracy because user queries do not normally contain sufficient context to associate a query with an appropriate Wikipedia page.

To understand the failure of Wikipedia as a knowledge base for disambiguation, it is helpful to assess Wikipedia based on the same criteria that have been applied to WordNet:

Sense granularity: Word senses are indicated by article topics in Wikipedia. For instance, in Wikipedia, the word Bush has various senses ranging from a type of plant, to a surname, and even to an island because there is a Wikipedia article for each of these three senses as discrete topics. Each sense should have adequate context for disambiguation in natural language, which is a decided advantage of Wikipedia. However, sense granularity is still an important issue in Wikipedia because there is no oversight of or planning for the nomination and selection of topics. Anyone can add a new meaning for a word by contributing a new Wikipedia article for that topic; the only constraint on a new page is that it must follow Wikipedia’s guidelines for articles. In consequence, a disambiguation program could spend unnecessary processing time on senses that are rarely used or incorrectly identify a sense because terms expressed in the query are either undefined or over-defined in Wikipedia.

Semantic connections: In Wikipedia, polysemes are generally connected to meanings through one of two methods: through anchor links that point to an article associated with one sense of the term or through a so-called disambiguation page that lists all meanings associated with a term. Harvesting word senses from anchor links has two advantages over a disambiguation page: It associates a meaning within the language context where it occurs, and it offers a count distribution of sense usage in Wikipedia. Experimental results show that distribution of sense usage is effective in query disambiguation since the primary sense (i.e., the sense that appears most frequently) is usually the correct one. However, no matter which sense representation is used, query disambiguation will suffer from the weaknesses of sense granularity.

Scale: As of December 2012, the English version of Wikipedia contained more than four million articles, with new articles submitted every day. However, it requires significant effort both to extract the knowledge embedded in Wikipedia articles and to build that knowledge into a structural resource for applications such as query disambiguation.

Analysis of the problems associated with granularity, sense connections, and scale in both WordNet and Wikipedia indicate that query disambiguation demands a type of knowledge resource that is very different from what either of these resources offer. For instance, based on the observation that users will correct spelling errors, add context, or change words in original queries to improve retrieval results (Guo, Xu, Li, & Cheng, 2008), large-scale query logs available from commercial search engines could be used to extract a series of queries from individual sessions and build a knowledge base that would not only catch grammatical variations and misspellings but also semantic contexts such as synonyms and co-occurring terms that point to the same topic (i.e., the same document). It would also be possible to construct a sense inventory by harvesting click-through records from query logs. Such a knowledge base built from query logs would not only save the cost of creating definitions and samples manually but might also be more effective for query disambiguation. In fact, using welldeveloped data mining algorithms, a knowledge base generated from a large and diversified query log, would be a very special kind of mass intelligence produced by user collaboration on a common task.

11 If two sense representations yield the same disambiguation results, such as page id 10649725 for query Greek philosophy stoicism, only one result is highlighted in bold.

12 http://wordnet.princeton.edu/wordnet/man/wnstats.7WN.html

To overcome the challenge of ineffective user queries, this research implemented a query disambiguation approach that integrates topic detection and maps the detected topic to the most appropriate Wikipedia page. This research tested two hypotheses for query disambiguation in IR. The experimental results could not reject the null hypothesis that there was no significant difference in performance between retrieval with query disambiguation and retrieval without disambiguation. Furthermore, statistical testing did not support the hypothesis that representing word meanings with structural Wikipedia knowledge such as anchor links would significantly improve disambiguation effectiveness in IR compared to representing meanings with text. Both of these findings suggest that future knowledge bases of word meanings should favor defining word senses by harvesting language usage patterns, probably from large search engine logs, in order to maintain a rich level of diversified contexts for query disambiguation and optimization. However, because the test collections used in this research contained only a limited number of ambiguous queries,13 the validity of any conclusions regarding query disambiguation based on statistical analysis must be considered preliminary due to the small sample size.

13 These are four ambiguous queries in the Blog collection and ten queries in the HARD collection.